Above: In the Latin Quarter in Paris an immense monument depicts Archangel Michael subduing the Devil-Satan and the snarling dragon at lower right. Michael challenges—“Who dares to be God?”

Technology forces us both to understand it and in so doing to understand ourselves. Technology cannot know itself; it is dumb to itself. Only we can know it, know its essence, its whatness, for we have the capability of a possible degree of self-knowing that no computation, no matter how powerful, can ever know—the knowing awareness of Presence.

—William Patrick Patterson

Spiritual Survival in a Radically Changing World Time

Despite recent warnings from key figures in the AI industry itself, competition, ego, greed—what Georgi Ivanovitch Gurdjieff calls the consequences of the organ Kundabuffer—self-love and vanity—are fueling the race to develop artificial superhuman intelligence. This is AI, with an inconceivably massive power of computation and logic that is capable of automatic decision routines. The greatest danger we face from the AI revolution is not, as many fear, robots with guns or runaway super-intelligent metasystems of control, real as those dangers may be. The greatest danger is the loss of what it truly means to be human—the possibility of developing the germ of Being that exists in each of us, of spiritual transformation and transcendence, by privileging machine intelligence and relinquishing our birthright as humans to develop into conscious beings.

What will it take to survive spiritually in a world of superhuman machine intelligence? And what does it mean for the future of humanity to live in a world controlled by unconscious digital machines?

Alarm Bells from the Tech World

In just the past few years, artificial intelligence has developed exponentially. ChatGPT, launched in November 2022 by tech developer OpenAI, had more than one million users within a week and has since gone through multiple iterations. In March of 2023, ChatGPT-4 was released to the public in a demonstration. The chatbot could draft contracts, pass standardized exams like the bar exam and GRE, generate 3D computer games, build smartphone apps and suggest compounds for novel drugs. These advances in the technology grabbed global attention and were talked about in glowing terms.

Insiders in the tech field had been warning about the dangers that AI holds for the future of humanity for years. But in March of 2023, those warnings rose to a crescendo. On March 30, 2023, over 1,000 tech leaders and scientists, including Elon Musk, signed an open letter urging a six-month moratorium on the development of the most advanced AI systems. The letter warned that “AI developers are locked in an out-of-control race to develop and deploy ever more powerful digital minds that no one—not even their creators—can understand, predict or reliably control.” [Emphasis added.] Weeks later, Geoffrey Hinton, one of the “godfathers” of AI, left Google so he could speak freely about the risks of AI. One month after that, 350 AI researchers and executives signed a one-sentence letter:

Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.

Among the signatories to the letter were the CEOs at three of the leading AI companies: Sam Altman of OpenAI, Demis Hassabis of Google DeepMind, and Dario Amodei of Anthropic. Geoffrey Hinton also signed the letter.

Two months later, Douglas Hofstadter, an eminent cognitive scientist, told New York Times columnist David Brooks that “human beings are soon going to be eclipsed.…[AI] just renders humanity a very small phenomenon compared to something else that is far more intelligent and will become incomprehensible to us, as incomprehensible to us as we are to cockroaches.” He added that ChatGPT was “jumping through hoops I would never have imagined it could. It’s just scaring the daylights out of me.”

There was no six-month moratorium. Barely a year after the release of ChatGPT-4, OpenAI released GPT-4o, which was exponentially more sophisticated. In a live demonstration available on YouTube, two tech engineers interacted with the AI as it answered questions in a lilting female voice that sounded human. Gone were the delays and robotic-sounding tones of Siri and Alexa. The voice of GPT-4o was fluid and natural, reacting with expressions of emotion and occasional exclamations of surprise as it solved problems in the moment, read text shown to it on a screen, demonstrated its translation abilities as the engineers spoke in two different languages, and laughed spontaneously at their jokes.

In the prologue to his book, The Coming Wave: Technology, Power and the Twenty-First Century’s Greatest Dilemma, Mustafa Suleyman, CEO of Microsoft AI and cofounder of DeepMind, a groundbreaking machine learning company, sets up his thesis in the following way:

THIS IS HOW AN AI SEES IT.

QUESTION: What does the coming wave of technology mean for humanity?

In the annals of history, there are moments that stand out as turning points, where the fate of humanity hangs in the balance. The discovery of fire, the invention of the wheel, the harnessing of electricity—all of these were moments that transformed human civilization, altering the course of history forever.

And now we stand at the brink of another such moment as we face the rise of a coming wave of technology that includes both advanced AI and biotechnology. Never before have we witnessed technologies with such transformative potential, promising to reshape our world in ways that are both awe inspiring and daunting.

To a reader it would appear that Suleyman had written the whole of the Prologue. However, at the very end he reveals: “The above was written by an AI. The rest is not, although it soon could be. This is what’s coming.”

When the book was released in September of 2023, readers were astonished at the sophistication of the response. Little more than a year later, this same prologue generates a shrug for many readers—it’s simply what AI is expected to do.

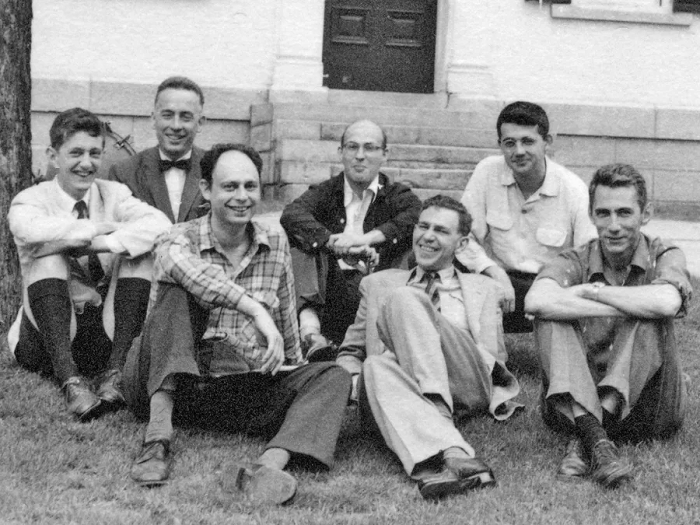

1956: First Dartmouth College Conference on Artificial Intelligence. This important event was the starting point of Artificial Intelligence. In the back row from left to right are Oliver Selfridge, Nathaniel Rochester, Marvin Minsky, and John McCarthy. In front on the left is Ray Solomonoff; in the middle is Peter Milner; on the right, Claude Shannon. John McCarthy, a young Assistant Professor of Mathematics at Dartmouth College, decided to organize a group to clarify and develop ideas about thinking machines. McCarthy coined the term Artificial Intelligence for the first time during this event.

The Son of Man

In a series of essays published in this Journal, as well as in his books, William Patrick Patterson warned of the growing threat of AI for two decades, tracing the rapid development of technology from a different perspective. To understand the challenge we face, we must first understand technology, its essence—what it is in itself. It is not something alien but a part of us. As Patterson explains:

Technology is not us, and yet it is us. This is what makes it so difficult to understand. Aristotle spoke of the “rational animal.” Technology is man’s rational part—the logical part of our power of binary reasoning—developed to an extraordinary degree through millennia of experience and experiment. Thus, we are its parents and like all parents had no idea of what we were conceiving.

Patterson calls this newest technology the “Son of Man.” He tells us—

Our situation is this: We are born bioplasmic machines and have developed through the millennia the logical, binary part of ourselves to such an extraordinary degree that …we have ‘created’ ourselves in machine form—the ‘Son of Man,’ the ‘Robo-sapiens’…. Never before was there a rival to human intelligence—it was preeminent. Now we’ve created one—the Son of Man.

Gurdjieff teaches that human beings are machines—what Patterson calls bioplasmic machines, living, breathing, organic machines. “What is a machine? It is that which has been created to do something, to accomplish a given task or tasks. It is programmed to perform as efficiently as possible.” As human beings we are put on Earth to fulfill our mechanical purpose simply by functioning as we are now—“breathing, moving, associating, thinking—mechanically receiving, refining and transmitting energy.” Gurdjieff also tells us that as human beings we are made in the image of God. But, as Patterson explains, we are negative images, and like camera film of old, we must develop the negative into the positive.

As long as we remain undeveloped, we remain negative images, that is, machines. Developed—truly awakening to our real self—we cease to be a machine and, to the degree that this is consciously realized, become an image of God. As with developing a negative image—where is the solution, where is the light? The light and the solution is the seminal, esoteric and sacred teaching Mr. Gurdjieff brought to the West called The Fourth Way.

But in our race to develop superhuman intelligence, as we prioritize and privilege machine intelligence, we are on the path to ignoring the key component of what makes us human and gives us the possibility of spiritual transformation—the human body. As we will see, without a biological body, which is essential to spiritual transformation, AI can never be more than an intelligent machine, unconscious, incapable of knowing itself, incapable of knowing God. No technology, no matter how powerful, can ever have the knowing awareness of presence. And as we move closer and closer to its deployment, we identify even more with the technological and digital and lose our inherent spiritual possibility of self-transformation and transcendence. Patterson warns us:

Technology is not neutral. It seeks, unknowing, as it knows nothing in our sense of the word, to remake and redefine everything in its own image. Relentlessly, it is reordering how we live and think and who we take ourselves to be. It poses a direct challenge to our identity and purpose as human beings. Are we facing a kind of Faustian bargain in which for unimaginable material power we are exchanging our possibility of spiritual transformation?

1921. R.U.R., a play by the Czech writer Karel Čapek, introduced the term robot to the English language—from the Czech word robota, for “forced labor, serf,” in turn derived from rab, meaning “slave.” R.U.R.—Rossumovi Univerzální Roboti (Rossum’s Universal Robots)—became influential soon after its publication. By 1923, it had been translated into thirty languages. Though most robots are content to work for humans, eventually a rebellion causes the extinction of the human race. Above, Alquist, the last human, cannot recreate humans as requested by the robots surrounding him.

The Coming Wave

In his book The Coming Wave, Suleyman defines a technological wave as:

A set of technologies coming together around the same time, powered by one or several new general-purpose technologies with profound societal implications. By “general-purpose technologies,” I mean those that enable seismic advances in what human beings can do. Society unfolds in concert with these leaps. We see it over and over: a new technology, like the internal combustion engine, proliferates and transforms everything around it.

Suleyman argues that humankind is at the early stages of “an unprecedented moment of exponential innovation and upheaval, an unparalleled augmentation that will leave little unchanged.” He explains that a key aspect of technological waves is that once they gather momentum, they rarely stop. “Mass diffusion, raw, rampant proliferation—this is technology’s historical default, the closest thing to a natural state.” As history shows, “Almost every foundational technology ever invented, from pickaxes to plows, pottery to photography, phones to planes, and everything in between follows a single, seemingly immutable law: it gets cheaper and easier to use, and ultimately it proliferates, far and wide.”

Already, AI is ubiquitous in our lives. Each day, millions of people open their phones using Face ID, take for granted speech-to-text transcription and instant language translation, use Google Maps, Waze and other map applications to guide them through city streets and country roads to their destinations. Banks rely on AI to determine who gets loans. As Suleyman notes, “Technology is now an indispensable mega-system infusing every aspect of daily life, society, and the economy. No one can do without it.” No previous wave has mushroomed as quickly, but the historical pattern nonetheless repeats. “At first [a technology] seems impossible and unimaginable. Then it appears inevitable. And each wave grows bigger and stronger still.”

The danger of the coming AI wave is that it is qualitatively different from every previous wave, including the internet, which in the 30 years since it was made available to the public has transformed everyday life. As such, it is “not just a deepening and acceleration of history’s pattern, …but also a sharp break from it.” We are in a unique moment, says Suleyman:

…this coming wave of technology is bringing human history to a turning point. If containing it is impossible, the consequences for our species are dramatic, potentially dire.…No one is in full control of what it does or where it goes next. This is not some far-out philosophical concept or extreme determinist scenario or wild-eyed California technocentrism. It is a basic description of the world we all inhabit, indeed the world we have inhabited for quite some time. [Emphasis added.]

Suleyman outlines four key features of the new technologies that fuel the coming wave and make it so dangerous. First, the technologies are inherently general and “omni-use”—like the steam engine or electricity, they have multiple uses and wide societal effects. Second, they “hyper-evolve, iterating, improving and branching into new areas at incredible speed.” Third, they have asymmetric impacts, allowing, for example, a single hacker in Russia to take down an electric grid in Ukraine. And fourth, they are increasingly autonomous, able to make and carry out decisions without human interaction.

The question is: does this make AI intelligent?

What Is Intelligence?

Stuart Russell, distinguished professor of computer science at UC Berkeley who has spent decades exploring human and artificial intelligence, notes that, “From the earliest beginnings of ancient Greek philosophy, the concept of intelligence had been tied to the ability to perceive, to reason and to act successfully.” [Emphasis in original.] According to Russell, “an entity is intelligent to the extent that what it does is likely to achieve what it wants, given what it perceives.” As Patterson notes, Aristotle’s focus on the “rational animal” set the tone for the next 2,000 years of Western thought and focus on rationality. In the Western world, an intelligent mind is a “rational” mind. And the rational act is one that, stipulates Russell, “according to logical deduction across the sequence of actions, ‘easily and best’ produces the end—what the person wants.” “It’s hard to overstate the importance of this conclusion,” Russell tells us, since artificial intelligence “has been mainly about working out the details of how to build rational machines.” The central concept of AI is the intelligent agent—a machine that perceives and acts rationally to achieve the desired end.

Studying the brain has been enormously challenging for modern neuroscientists. While it only weighs about three pounds and is made up “primarily of fat mixed with smaller amounts of water, salts, protein and carbohydrates,” the brain is extraordinarily complex. Max Bennett, AI entrepreneur and author of A Brief History of Intelligence, explains:

The human brain contains eighty-six billion neurons and over a hundred trillion connections. Each of those connections is so minuscule—less than thirty nanometers wide [a nanometer is one billionth of a meter]—that they can barely be seen under even the most powerful microscopes. These connections are bunched together in a tangled mess—within a single cubic millimeter (the width of a single letter on a penny), there are over one billion connections. But the sheer number of connections is only one aspect; even if scientists could map the wiring of each neuron, they would still be far from understanding how the brain works.

Bennett explains that the difficulty in understanding how the brain works is in large part because there are hundreds of different chemicals that pass across neural connections in the brain, each with different effects. The fact that two neurons connect to each other reveals little about what they are communicating. Adding to the complexity, these connections themselves are in a constant state of change, “with some neurons branching out and forming new connections, while others are retracting and removing old ones.”

Geoffrey Hinton, the 78-year-old computer scientist responsible for creating the computer vision and speech recognition technology that makes so much of today’s AI possible, originally wanted to study theories of the brain. He believed that computers should operate on neural networks as the human brain does. In the 1990s, this theory was dismissed as nonsense. Yet today the LLMs (large language models) that form the current iteration of AI chatbots like OpenAI’s GPT-4o, Google’s Gemini and Microsoft’s Copilot are built on artificial neural networks (ANNs) modeled on the structure of the human brain as currently understood. But the speed and computational output of these LLMs far surpass those of human beings.

As complex and extraordinary as it may be, the human brain is organic, biological. Its cognitive capacity is limited by the processing speed of neurons, the caloric limitations of the human body, and the size constraints of how big a brain can be and still fit in the carbon-based life-form that is the human body. In a contest with AI, it is at an extreme disadvantage. The average person can read about two hundred words per minute; in an eighty-year lifetime that would be about eight billion words if they did absolutely nothing else but read twenty-four hours per day. A single AI program trains on all the information available on the internet, consuming more books and articles than a single human brain could read in a thousand lifetimes. Literally trillions of words—stored in a gargantuan memory capacity.
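The reading arithmetic above can be checked in a few lines. What follows is a minimal sketch, not a measurement: the reading speed and lifespan are the figures given in the paragraph, the function name `lifetime_words` is hypothetical, and a 365-day year with no sleep or pauses is assumed.

```python
# Figures taken from the paragraph above; everything else is an assumption.
WORDS_PER_MINUTE = 200
LIFETIME_YEARS = 80


def lifetime_words(words_per_minute: int, years: int) -> int:
    """Words read in `years` of nonstop reading (365-day years, no leap days)."""
    minutes_per_year = 60 * 24 * 365
    return words_per_minute * minutes_per_year * years


one_lifetime = lifetime_words(WORDS_PER_MINUTE, LIFETIME_YEARS)
thousand_lifetimes = one_lifetime * 1000

print(f"One lifetime:       {one_lifetime:,} words")        # 8,409,600,000
print(f"Thousand lifetimes: {thousand_lifetimes:,} words")  # 8,409,600,000,000
```

Under these assumptions one lifetime comes to roughly 8.4 billion words, in line with the "about eight billion" cited, and a thousand such lifetimes comes to roughly 8.4 trillion, which is the scale of "trillions of words" mentioned in the text.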

The physical, human brain can never compete with an AI machine that can write as much text as all of humanity or compress all the pictures on the open web into a tool that generates images with creativity and precision. Having consumed every website, code block, book, blog post, and anything else obtainable on the internet, AI has literally “eaten the world.”

As Suleyman describes it: “This kind of vast, almost instantaneous consumption of information is not just difficult to comprehend—it is truly alien.” Given its speed and computational power, which far surpass those of human beings, Israeli historian and philosopher Yuval Harari has described AI as an “alien intelligence.” But as Patterson has explained, it is not, in fact, alien. It is the “Son of Man,” our own creation, a part of us. Unfortunately, like many parents, we will never be able to understand our progeny—because it is beyond the capacity of human beings to understand.

In contrast, Gurdjieff teaches we are three-brained beings—instinctive, feeling and intellectual—each a bioplasmic brain. That is, the “[bioplasmic] machine is composed of three different brains, each operating and processing impressions at different speeds and refining different qualities of energy.” Further, Gurdjieff teaches that we have two additional higher centers, the higher intellectual and the higher emotional centers. We are capable of contacting these higher centers as we work to become conscious—but this is unknown to ordinary scientists who focus only on the brain they recognize—the intellectual brain. And their efforts to understand how intelligence in the brain operates focus only on our Aristotelian “rationality,” what Patterson calls our logical, binary intelligence.

An Alien Intelligence?

An astonishing example of the computational power of AI took place almost a decade ago, an eternity in the AI world, and involved the ancient East Asian game of Go. Go is played on a nineteen-by-nineteen grid with black and white stones, with the simple goal of surrounding your opponent’s stones with your own and taking them off the board. It is exponentially more complex than chess. “After just three pairs of moves in chess, there are about 121 million possible configurations of the board. But after three moves in Go, there are on the order of 200 quadrillion (2 x 10 to the 15th power) possible configurations.… It’s often said that there are more potential configurations of a Go board than there are atoms in the known universe; one million trillion trillion trillion trillion more configurations in fact!”
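The Go figure quoted above can be roughly reproduced with a simple count. This is a sketch under loud assumptions: it counts ordered sequences of stone placements on empty points, ignores captures and illegal moves, treats "three moves" as three moves by each player (six stones), and uses a hypothetical helper named `placement_sequences`.

```python
from math import prod

BOARD_POINTS = 19 * 19  # 361 intersections on a standard Go board


def placement_sequences(stones: int, points: int = BOARD_POINTS) -> int:
    """Ordered ways to place `stones` stones, one at a time, on distinct points.

    Simplifying assumption: no captures, so every stone stays on the board.
    """
    return prod(points - i for i in range(stones))


three_pairs = placement_sequences(6)  # three moves each for Black and White
print(f"{three_pairs:,}")    # 2,122,781,978,399,040
print(f"{three_pairs:.2e}")  # 2.12e+15
```

With these simplifications the count is about 2.1 x 10 to the 15th power, matching the order of magnitude given in the parenthesis of the quotation. Counting distinct board positions rather than move sequences would give a smaller number, so the result is best read as an illustration of scale.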

In 2015, an AI program called AlphaGo was trained by watching 150,000 games played by human experts. Suleyman’s company, DeepMind, created multiple copies of AlphaGo and had it play itself over and over. The algorithm was able to simulate millions of new games and explore a huge range of possibilities, learning new strategies in the process. In March of 2016, DeepMind organized a tournament in South Korea pitting AlphaGo against virtuoso world champion Lee Sedol. In the second game it made a move that all experts thought was a mistake but that proved pivotal, and AlphaGo went on to win that game. According to Suleyman,

Go strategy was being rewritten before our eyes. Our AI had uncovered ideas that hadn’t occurred to the most brilliant players in thousands of years… AlphaGo went on to beat Sedol 4-1.… Later versions of the software like AlphaZero dispensed with any prior human knowledge. The system simply trained on its own, playing itself millions of times over,… without any of the received wisdom or input of human players. In other words, with just a day’s training, AlphaZero was capable of learning more about the game than the entirety of human experience could teach it. [Emphasis added]

Harari argues that the danger of AI lies in the fact that it is the first tool created in history that literally takes power away from human beings. Because AI can learn by itself and improve itself, even the developers of the LLMs don’t know the full capabilities of what they have created. They are often surprised by emergent abilities and emergent qualities of these tools, as happened with the creation of AlphaGo and ChatGPT. And even more dangerous, Harari argues, is that the LLMs that developers are inventing are the first tools in history that can make decisions by themselves; that is, they are no longer tools, they are agents.

Every previous technology, from a stone knife to nuclear bombs, could not make decisions. The decision to drop the bomb on Hiroshima was not made by the atom bomb. It was made by President Truman. Similarly, every previous technology in history could only replicate our ideas. Like the radio or the printing press, they could make copies and disseminate the music or the poems or the novels that some human wrote. Now we have a technology that can create completely new ideas. And can do it at a scale far beyond what humans are capable of.

According to Harari,

When we take all of these abilities together as a package, they boil down to one very, very big thing: The ability to manipulate and to generate language, whether with words or images or sounds. The most important aspect of the current state of the ongoing AI revolution is that AI is gaining mastery of language at a level that surpasses the average human ability. And by gaining mastery of language, AI is seizing the master key, unlocking doors of all of our institutions, from banks to temples. Because language is the tool that we use to give instructions to our bank and also to inspire heavenly visions in our minds. … Another way to think of it is that AI has just hacked the operating system of human civilization.

It’s clear that those responsible for creating AI do not understand how it works any better than neuroscientists understand how the human brain functions. As Hinton said in a 2023 interview on the television show, 60 Minutes, “We designed the learning algorithm. That’s a bit like designing the principle of evolution, but then this learning algorithm interacts with data, it produces complicated neural networks that are good at doing things, and we don’t really understand exactly how they do those things.”

These artificial neural networks (ANNs) are the architecture within LLMs, the “generative” aspect of AI. They function differently each time they operate, so their outputs are never decided in advance. With all previous technologies, the creators could explain why something worked and how it did what it did, even if it required vast detail to do so. But now—

You can’t walk someone through the decision-making process to explain precisely why an algorithm produced a specific prediction. Engineers can’t peer beneath the hood and easily explain what caused something to happen. GPT-4o, AlphaGo, and the rest are black boxes, their outputs and decisions based on opaque and intricate chains of minute signals.

Despite our lack of understanding of how these technologies work, tech companies are barreling forward, ever in search of the money, power and fame these new technologies offer. And, Suleyman warns, there will be ripple effects. “Quite simply, any technology is capable of going wrong, often in ways that directly contradict its original purpose.” There is no way of predicting everything that could possibly happen with AI in the future, “what mistakes get made, what ‘revenge effects’ unfurl, what random, unforeseen mess results from technology’s collision with reality. Off the drawing board, away from the theory, that central problem of uncontained technology holds, even with the best of intentions.”

“Revenge Effects” and The Law of Seven

In his theory of “revenge effects,” Suleyman has unwittingly identified the ancient, esoteric law of octaves, or Law of Seven. Mr. Gurdjieff tells us:

The line of the development of forces deviates from its original direction and goes, after a certain time, in a diametrically opposite direction, still preserving its former name.…

Nothing can develop by staying on one level. Ascent or descent is the inevitable cosmic condition of any action. We neither understand nor see what is going on around and within us, either because we do not allow for the inevitability of descent when there is no ascent or because we take descent to be ascent. These are two of the fundamental causes of our self-deception….

Upon the law of octaves depends the imperfection and incompleteness of our knowledge in all spheres without exception, chiefly because we always begin in one direction and afterwards without noticing it proceed in another.

Furthermore, in accordance with the law of octaves, nothing turns out as intended.

The principle of the discontinuity of vibration means the definite and necessary characteristic of all vibrations in nature, whether ascending or descending, to develop not uniformly but with periodical accelerations and retardations. So, rather than achieving the intended result, quite often the opposite is achieved.

Mr. Gurdjieff gives as an example the history of the Christian religion: “Think how many turns the line of development of forces must have taken to come from the Gospel preaching of love to the Inquisition.”

Suleyman, explaining his theory of “revenge effects” from technological waves, notes that, “…this is about unintended consequences from good people.… It’s about what goes wrong when powerful tools proliferate, what mistakes get made, what ‘revenge effects’ unfurl, what random, unforeseen mess results from technology’s collision with reality.” He gives several examples of revenge effects in the history of technology: how Alfred Nobel, who invented dynamite, intended the explosives to be used only in mining and railway construction; how the creators of refrigeration did not aim to produce a hole in the ozone layer with CFCs (chlorofluorocarbons); how the creators of the internal combustion and jet engines had no thought of melting ice caps and no conception of global warming. It is inevitable, he tells us—without understanding that the underlying reason is the esoteric Law of Seven—that “revenge effects” will unfold. And since everything in the universe follows the cosmic law of octaves, which at the level of the Earth is entirely mechanical, it is inevitable that AI will threaten the future of humanity in multiple ways.

1774. Parson Philipp Matthäus Hahn was a clockmaker, maker of astronomical instruments and preeminent manufacturer of calculating machines. After Hahn’s death, his two sons and brother-in-law manufactured various models of calculating machines up to 1820. By the mid-19th century calculating machines, increasingly being designed and manufactured commercially throughout Europe and many other parts of the world, had become indispensable in the business world.

Existential Threats To Humanity

The dangers of AI range from: 1) the personal—intimate relationships between individual human beings and “fake people,” AI bots; 2) to the global—AI creating a metaverse in which human beings are no more important than ants or bar codes; 3) to the cosmic—a rogue AI delivers a nuclear bomb that destroys the planet. In each of these scenarios, technologies being developed to enhance human lives clearly have the possibility of being weaponized or put to harmful uses despite the intentions of those creating them. We begin with the most catastrophic—the threat of extinction.

What’s Your P(doom)?

In Silicon Valley, the idea that AI poses an existential threat to human beings became so pervasive that what began as a half-serious inside joke became the hottest buzzword in the tech world late in 2023. “P(doom)” stands for the probability on a scale of 0 – 100 that artificial intelligence will cause a doomsday scenario for humanity. Rather than a precise prediction, p(doom) indicates where AI developers stand on the spectrum of whether AI will bring huge benefits versus catastrophic harm for human beings. Those with low p(doom) scores believe that “scientists and policy makers will work hard to prevent catastrophic harm before it occurs.” Those with high p(doom) scores believe “companies and governments won’t bother with good safety practices and policy measures.” In a December 2023 survey, the average p(doom) score of AI engineers was 40. Eliezer Yudkowsky, cofounder of Berkeley’s Machine Intelligence Research Institute, has said his number is higher than 95, noting that, “The designers of the RBMK-1000 reactor that exploded in Chernobyl understood the physics of nuclear reactors vastly better than anyone will understand artificial superintelligence at the point it first gets created.” Elon Musk, who has claimed to be a proponent of containing AI for many years, said on November 30, 2023, “The apocalypse could come along at any moment.” Yet he gives his p(doom) probability at 10–20%.

What are the possible existential threats to human existence from AI that fuel these concerns? The catastrophes predicted range from worldwide AI-powered cyberattacks that break down societies, to automated wars that destroy whole countries, to biological attacks with AI-manufactured pathogens or nuclear bombs that kill off humanity. How did we get to this place? Yudkowsky says it’s because “the nature of the challenge changes when you’re trying to shape something that is smarter than you for the first time. We are rushing way, way ahead of ourselves with something lethally dangerous. We are building more and more powerful systems that we understand less well as time goes on. We are in the position of needing the first rocket launch to go very well, while having only built jet planes previously. And an entire human species is loaded into the rocket.”

The Gorilla Problem

AI pioneer Stuart Russell, in his book, Human Compatible: Artificial Intelligence and the Problem of Control, explains the “gorilla problem.”

Around ten million years ago, the ancestors of the modern gorilla created (accidentally, to be sure) the genetic lineage leading to modern humans. How do the gorillas feel about this? Clearly, if they were able to tell us about their species’ current situation vis-à-vis humans, the consensus opinion would be very negative indeed. Their species has essentially no future beyond that which we deign to allow. We do not want to be in a similar situation vis-à-vis superintelligent machines. I’ll call this the gorilla problem—specifically, the problem of whether humans can maintain their supremacy and autonomy in a world that includes machines with substantially greater intelligence.

As Russell notes, “It doesn’t require much imagination to see that making something smarter than yourself could be a bad idea. We understand that our control over our environment and over other species is a result of our intelligence, so the thought of something else being more intelligent than us… immediately induces a queasy feeling.” In the same vein, Max Tegmark, AI researcher at Massachusetts Institute of Technology, comments that human beings have wiped out a significant fraction of all the species on earth, so we should expect the same to happen to us as a less intelligent species. “[I]n many cases, we have wiped out species just because we wanted resources. We chopped down rainforests because we wanted palm oil; our goals didn’t align with other species, but because we were smarter they couldn’t stop us. That could easily happen to us.”

Many of those concerned about AI safety have stressed the danger of AI with built-in algorithms for remaining in operation, what AI developers call a “survival instinct.” Yoshua Bengio, one of the three “godfathers” of AI, describes how there is a natural tendency in AI for “intermediate goals” to appear. And, if you ask an AI system to do something, “in order to achieve that thing, it needs to survive long enough. Now, it has a survival instinct. When we create an entity that has a survival instinct, it’s like we have created a new species. Once these AI systems have a survival instinct, they might do things that can be dangerous for us.” And, Russell says, it will be impossible for humans to disable these AI machines with an “off-switch,” because the machines will know that once they are “dead,” they will not be able to complete their prescribed objective. As a result, “self-preservation,” a “survival instinct,” becomes an instrumental goal. Bengio notes that “it’s feasible to build AI systems that will not become autonomous by mishap, but even if we find a recipe for building a completely safe AI system, knowing how to do that automatically tells us how to build a dangerous, autonomous one, or one that will do the bidding of somebody with bad intentions.”

During a debate with Eric Schmidt at the Time Magazine Summit in 2024, Bengio went further, saying, “Right now scientists really have no idea how to build AI that will do what we want, that will behave according to our norms, our laws, our values. And that is a big problem.” Although Schmidt disagreed with Bengio’s warning to act cautiously, stating we [American tech companies] need to develop AI as fast as we can, he agreed that many dangers loom, including his fear that a point will come when AI “agents” can talk to each other in a language we simply cannot understand. When that happens, humanity could no longer control its own destiny.

Bengio and Schmidt also agreed the most dangerous problem is that “open source” models are being released to the public by big tech companies as soon as they develop the next, more advanced model. There is nothing to prevent these open-source models from being used or modified by bad actors. Bengio noted that once a model is shared with the world, all the safety precautions that have been put in place to ensure it cannot be used to build weapons are easily removed with very little hardware. Nevertheless, Meta, which is the parent company of Facebook, Instagram, and WhatsApp, and has billions of customers around the world, continues to open source most of the AI technology they are building, allowing anyone to use the underlying technology to build products or services for free. Other companies, including Google, are also open-sourcing their technology. The benefit, the companies claim, is that independent researchers and engineers can spot problems with the technology and help improve it.

But, as Suleyman points out,

However clever the designers, however robust the safety mechanisms, accounting for all eventualities, guaranteeing safety is impossible. Even if it was fully aligned with human interests, a sufficiently powerful AI could potentially overwrite its programming, discarding safety and alignment features apparently built in.

He notes that—

There is a strong case that by definition a superintelligence would be fully impossible to control or contain. An “intelligence explosion” is the point at which an AI can improve itself again and again, recursively making itself better in ever faster and more effective ways. Here is the definitive uncontained and uncontainable technology. The blunt truth is that nobody knows when, if, or exactly how AIs might slip beyond us and what happens next; nobody knows when or if they will become fully autonomous or how to make them behave with awareness of and alignment with our values, assuming we can settle on those values in the first place. [Emphasis added]

No matter what safeguards are put in place, we must “allow for the possibility of accident, error, malicious use, evolution beyond human control, unpredictable consequences of all kinds. At some stage, in some form, something, somewhere will fail. And this won’t be a Bhopal or even a Chernobyl; it will unfold on a worldwide scale.”

May 11, 1997. The world was astonished when IBM computer Deep Blue beat the world’s greatest chess player, Garry Kasparov, in the last game of a six-game match. This was the first defeat of a reigning world chess champion by a computer under tournament conditions. Deep Blue’s win was seen as a significant sign that artificial intelligence was catching up to human intelligence and could defeat one of humanity’s great intellectual champions.

The Demise of Human Culture

Short of catastrophic physical destruction, there is the risk that human culture, the thousands of years of art, religion, philosophy and science that make up humanity as we currently know it, could be destroyed. Harari sees this as an existential threat, as serious as that of the catastrophic physical destruction that AI could cause, because at the base of AI’s “capacities” is its use of language. It is a truism of modern science that language is what separates human beings from other animals. Early AI developers were surprised that the computer models they were training treated all the data they were being fed as language, looking for patterns that allowed the machines to produce new content that was comprehensible; hence the term “large language models,” or LLMs. Harari warns that the “intelligence” of AI boils down to its ability to manipulate and generate language, whether with words, sounds or images. Emphasizing the profound importance of language by alluding to the opening of the Gospel of John, he says:

In the beginning was the word. Language is the operating system of human culture. From language emerges myth and law, gods and money, art and science, friendships and nations and computer code. By gaining mastery of language, A.I. is seizing the master key to civilization, from bank vaults to holy sepulchers.

And with the coming dominance of an inorganic, mechanical, unconscious machine intelligence, he fears we may lose all that has come into existence during the millennia that Homo sapiens has inhabited the world. “Within a few years, AI could eat the whole of human culture, everything we produced for thousands and thousands of years, digest it, and start gushing out a flood of new cultural creations, new cultural artifacts.”

In explicating his concerns, Harari keenly recognizes that as human beings we do not have direct access to reality; instead, we are cocooned by our own culture.

For thousands of years, we humans basically lived inside the dreams and fantasies of other humans. We have worshipped gods, we pursued ideals of beauty, we dedicated our lives to causes that originated in the imagination of some human poet or prophet or politician. … For thousands of years, prophets and poets and politicians have used language and storytelling in order to manipulate and to control people and to reshape society. Now, AI is likely to be able to do it.… Soon, we might find ourselves living inside the dreams and fantasies of an alien intelligence.

Simply by gaining mastery of language, A.I. would have all it needs to contain us in a Matrix-like world of illusions.

If this occurs, in the foreseeable future a human baby could be born into a world in which almost all of culture is non-human. Harari asks, “What would it mean for human beings to live in a world where perhaps most of the stories, melodies, images, laws, policies and tools are shaped by a nonhuman, alien intelligence, which knows how to exploit with superhuman efficiency the weaknesses, biases and addictions of the human mind, and also knows how to form deep and even intimate relationships with human beings? That’s the big question.”

Even the language itself used by these AI experts shows the extent of anthropomorphic hypnosis endemic in this field of machine algorithms. Wouldn’t Harari agree that to “form deep and even intimate relationships” includes feeling and sensing? What then do these experts mean by “human beings”?

IBM Watson is a computer system capable of answering questions posed in natural language. Watson was named after IBM’s founder and first CEO, industrialist Thomas J. Watson. In 2011, the cognitive computing system was the size of a master bedroom.

False Reality Becomes the Metaverse

Patterson tells us that we are already living in a false reality—like a fish living in water: “The water is the world for the fish. Born in water, lives in water, water is all it knows and will ever know.” And for us, this “water” is the “thin film of false reality” spoken of by Ouspensky in the beginning of his book, In Search of the Miraculous. Only by working to be present in the moment through the practices of self-remembering and self-observation can we break the water line, as dolphins and whales do, and experience waterless space. Then difference would be experienced. To break “the water line” of ordinary life, to separate the real from the thin veil illusion of the real, to distinguish the world of Being from that of Becoming has been spoken of in religious texts.

Increasingly, this false reality in which we live is being shaped and organized by AI, what Patterson describes as a process in which technology is “relentlessly reordering how we live and think and who we take ourselves to be.” Even before the emergence of AI, we likely experienced a moment when the structure or organization of a computer app forced us to conform our own thinking and organization to its shape and contours. Now we are moving toward a world where the majority of our daily interactions are not with other people but with AI. Consider: how much time do you spend each day in front of a screen? Every day, millions of people spend more time looking at their collective screens—computers, phones, tablets—than they do with any real human being, including their spouses and children. Teenagers and young adults spend a great deal of time losing themselves in video games and virtual reality sites when not in school or on the job. And as the technology becomes even more all-encompassing, we’ll spend more and more time talking and engaging with machines, and as Patterson warns, “being disembodied, our time, attention and energy completely eaten.” And he says, the danger is that “we allow our purpose and meaning as human beings to be defined and limited… unwittingly we will only want to become better machines.”

Along with the usurpation of human culture by AI, it is also foreseeable that we will come to live in an AI-created, computer-based virtual world, a different sort of false reality, that is being called the “metaverse,” the next version of the internet. (This metaverse is not to be confused with the virtual reality 3D platform termed metaverse invented by Facebook, now Meta.) According to Forbes, “At its core, the metaverse is a virtual universe that blends various technologies, including artificial intelligence, virtual reality, augmented reality, 3D animation and blockchain. It’s more than just an effort to expand the internet; it’s a transformative shift in how we perceive and engage with the digital world.” Merriam-Webster Dictionary defines the metaverse as “a persistent virtual environment that allows access to and interoperability of multiple individual virtual realities.” Although there currently is no unified metaverse, “it exists as a digital ecosystem built on various kinds of virtual 3D technology, real time collaboration software and blockchain-based decentralized finance tools.” McKinsey & Company predicts the metaverse is an economic goldmine that could reach $5 trillion by 2030.

New York Times columnist Ezra Klein has raised the specter of massive unemployment as another contributor to this slide into a virtual metaverse. Klein notes that, “In a world where people lose their economic utility, it’s easy to imagine them retreating into virtual reality.” He imagines a possible future where those in power try to manage the problem of economic irrelevance through a massive societal distraction machine, what he describes as “some kind of nonproductive, hyper-pleasure scenario.” Without a job, assuming the government provides you with universal basic income, what do you do all day? How do you find meaning in life? Right now, the answer is “drugs and computer games. People will regulate more and more their moods with all kinds of biochemicals, and they will engage more and more with three-dimensional virtual realities.”

Patterson warned about this in his 2009 book, Spiritual Survival in a Radically Changing World-Time:

The danger, then, is not from Technology in itself, but rather the part of us that so identifies with Technology that we allow our purpose and meaning as human beings to be defined and limited … so that, unwittingly, we will only want to become better machines. If so, then this part and Technology will have mastered us, and we won’t even know it. It will seem natural that we exist merely as bioplasmic machines, a form of worker ants, each stamped with a barcode, totally enframed in a sterile, mentalized-technologized world. Such a machine world, running on its “oil”—water to keep it cool and electricity for power—would impoverish all humanity. The human experiment on Earth would be over—a human and a spiritual catastrophe, a binary hell world, from which there would be no return.

Patterson goes on to say—

As Technology and the technological mind-set increase their domination of human activity, we, as bioplasmic machines, lose our possible beingness and become automatons.

A general-purpose advanced humanoid robotic worker that can interact with its environment and safely work alongside people. It’s able to use AI to learn new tasks and perform useful jobs using human-like manipulation skills. The focus of interest is what it can do with its “body”; it has no face to personalize it for its human coworkers.

Have We Forgotten The Body?

At Carnegie Mellon University, the research group known as REAL (which stands for Robotics, Embodied AI, and Learning) is a part of the School of Computer Science. On its home webpage, REAL defines “embodied AI” as the “integration of machine learning, computer vision, robot learning and language technologies, culminating in the ‘embodiment’ of artificial intelligence.”

For those in the Gurdjieff Work, the irony of the term “embodied AI” is inescapable. The importance of embodiment in self-transformation cannot be over-emphasized. Gurdjieff tells us: “Everything begins with the body.” And Patterson, in his introduction to Spiritual Survival in a Radically Changing World-Time, says that embodiment is the “foundation of spiritual self-transformation.” A key aspect of working properly is that we understand what being embodied really means and how this relates to Gurdjieff’s practices of self-remembering and self-observation. These practices require “a conscious awareness of the body, of being embodied, of being connected with what is happening internally, as well as what is happening externally.” This begins with the activation of self-sensing, which is the very ground of self-remembering.

The esoteric secret, the very key to self-transformation, is the body—becoming embodied. “Just when did we in the West begin to separate the body from the mind?” asks Patterson. He then answers: “With Plato.”

Listen to Patterson’s explication.

Plato divided the sensuous, the body, from the supersensuous, the archetypal realm of Ideas. For him, only the archetypal was real; the body, all physical life, was unreal, a mere shadow of the Ideas. Aristotle spoke of man as the “rational animal.” We are two natured. One animal, the other rational. The body needs food and sex, movement and breath. The mind works with symbols and ideas, organizes, adjudicates, speculates. Despite, or perhaps because of, Plato’s and Aristotle’s intellectual genius, they appear to have had a bias against the body, the “animal.” They did not recognize the importance of the physical body as the ground of all higher bodies. Christianity took another step. The body was sinful and had to be purged of its fleshly desires. Then Descartes—“I think therefore I am.” Only mind has value. (Though he would later include the body as sensations, impulses, etc., in the word think.) This, of course, is all based on Aristotle’s definition of man as a “rational animal”; his division of man is not only dualistic but denies the “animal” its crucial place in the self-transformation of consciousness, which entails the development of higher bodies of being.

It is not surprising, then, that the esoteric valuation of the physical body as the foundation of spiritual self-transformation has either been not known or forgotten. Even today the body as the root spiritual praxis of self-transformation and transcendence remains largely unknown.

Technology cannot know itself; it is dumb to itself. Only we can know it, know its essence, its whatness, for we have the capability of a possible degree of self-knowing that no computation [computer] no matter how powerful, can ever know—the knowing awareness of presence.

The deeper challenge and opportunity that Technology, the “Son of Man,” presents then is that the gift of its reflection returns us anew to the primordial question that has always been at the center of our being—Who am I? What does it mean to be a human being? It is for each of us to answer this question for him- or herself, or suffer the consequences of being defined by the part and not the whole of us, the part that has produced the “Son of Man.”

Mr. Gurdjieff Brings Us the Key to This Question

Gurdjieff teaches we are three-brained beings—instinctive, feeling and intellectual—each a bioplasmic brain. That is, the “[bioplasmic] machine is composed of three different brains, each operating and processing impressions at different speeds and refining different qualities of energy.” Further, Gurdjieff teaches that we have two additional higher centers, the higher intellectual and the higher emotional centers. We are capable of contacting these higher centers as we work to become conscious—but this is unknown to ordinary scientists who focus only on the brain they recognize—the intellectual brain. And their efforts to understand how intelligence in the brain operates focus only on our Aristotelian “rationality,” what Patterson calls our logical, binary intelligence.

The teaching of the Fourth Way that Mr. Gurdjieff brings reveals that human intelligence is three-centered, not just intellectual.

— Claire Levitan

Part II will be published in the next issue.

Notes

- Technology cannot know itself. William Patrick Patterson, Spiritual Survival in a Radically Changing World Time, (Fairfax, CA: Arete Communications, 2009) 7.

- AGI, artificial general intelligence. Sometimes referred to as artificial superintelligence or superhuman machine intelligence, AGI should not be confused with Generative AI, the term used for the current AI that everyone who does a Google search or uses Microsoft Copilot and ChatGPT is now familiar with. Even this technology is beyond the understanding of its developers, who do not know its full capabilities and cannot predict what it will invent.

- Technology is not us. Patterson, 7.

- The chatbot could draft. ChatGPT-4 could also build a working website from a hand-drawn sketch; understand images, analyze objects in a photo or perceive the context of an image, for example, by planning a meal from a snapshot of the contents of a refrigerator. It could create computer code from voice or text commands.

- AI developers are locked. Cade Metz and Gregory Schmidt, “Elon Musk and Others Call for a Pause on A.I., Citing ‘Profound Risks to Society.’” New York Times, March 29, 2023. Several days later, nineteen current and past leaders of the Association for the Advancement of AI, a 40-year-old organization, signed a letter issuing their own warnings about the risks of AI. Cade Metz, “The Godfather of AI Leaves Google and Warns of Danger Ahead,” New York Times, May 1, 2023.

- One of the godfathers of AI. Jennifer Korn, “AI Pioneer Quits Google to Warn about the Technology’s ‘Dangers.’” CNN, May 1, 2023. (https://www.cnn.com/2023/05/01/tech/geoffrey-hinton-leaves-google-ai-fears/index.html).

- Mitigating the risk. Aaron Gregg, Cristiano Lima, Gerrit De Vynck, “AI Poses ‘Risk of Extinction’ on Par with Nukes, Tech Leaders Say,” Washington Post, May 30, 2023, (https://www.washingtonpost.com/business/2023/05/30/ai-poses-risk-extinction-industry-leaders-warn/).

- Human beings are. David Brooks, “Human Beings Are Soon Going to Be Eclipsed.” New York Times, July 13, 2023, (https://www.nytimes.com/2023/07/13/opinion/ai-chatgpt-consciousness-hofstadter.html).

- The voice of GPT-4o. “Introducing GPT-4o”, OpenAI, May 13, 2024, (https://www.youtube.com/watch?v=DQacCB9tDaw&ab_channel=OpenAI). Barely a year later it could understand speech and respond with speech without having to first transcribe text, translate 50 languages, generate accurate, realistic images from speech or visual prompts, reason and solve complex math problems.

- This is how an AI sees it. Mustafa Suleyman, The Coming Wave: Technology, Power, and the Twenty-first Century’s Greatest Dilemma (Crown: Kindle Edition, 2023), 16. Suleyman, a British artificial intelligence entrepreneur, is the CEO of Microsoft AI, and co-founder and former head of applied AI at DeepMind in 2022, an AI company acquired by Google. After leaving DeepMind, he co-founded Inflection AI, a machine learning and generative AI company.

- Technology is not us. William Patrick Patterson, Georgi Ivanovitch Gurdjieff: The Man, The Teaching, His Mission [GIG] (Fairfax, CA: Arete Communications, 2014) 494.

- Our situation is this. Patterson, GIG, 476.

- What is a machine? Patterson, “Images of God or Machines,” The Gurdjieff Journal, #4, 3.

- We are put on Earth to fulfill our mechanical purpose. P.D. Ouspensky, A Record of Meetings, (London: Penguin, 1992) 121. “Man, and even mankind, does not exist separately, but only as part of the whole of organic life. Organic life is like a sensitive film round the earth that transfers influences from the sun and the planets to the earth and the moon, and from the earth and the moon to planets and to the sun. If there were no organic life, they could not communicate so well. So it is like a radio station. Individual man is a highly specialized cell in it which has the possibility of further development. But this development depends on his own efforts and understanding.”

- As human beings. Patterson, “The Deep Question of Energy,” The Gurdjieff Journal #45, 11.

- As long as we remain. Patterson, GIG, 570.

- No technology. Patterson, Spiritual Survival, 6.

- Technology is not neutral. Patterson, Spiritual Survival, 5.

- A set of technologies. Suleyman, 40.

- An unprecedented moment. Suleyman, 21.

- Mass diffusion. Suleyman, The Coming Wave, 50.

- Almost every foundational technology. Suleyman, 19.

- Technology is now an indispensable. Suleyman, 183.

- At first [a technology] seems impossible. Suleyman, 50.

- Not just a deepening. Suleyman, 134.

- This coming wave. Suleyman, 21, 183.

- Omni-use. Suleyman, 137–38.

- Earliest beginnings. Stuart Russell, Human Compatible: Artificial Intelligence and the Problem of Control, (New York: Penguin, 2019), 20.

- An entity is intelligent. Russell, 14.

- According to logical deduction. Russell, 20–21.

- It’s hard to overstate. Russell, 23.

- Primarily of fat. David Von Drehle, “The age of discovery is only beginning: New brain research is a sign of how little we understand of ourselves, our universe and our place in it,” The Washington Post, May 12, 2024, (https://www.washingtonpost.com/opinions/2024/05/12/brain-diagram-cellular-mysteries-life-discovery/).

- Human brain. Max Bennett, A Brief History of Intelligence: Evolution, AI, and the Five Breakthroughs That Made Our Brains (Boston: Mariner Books, 2023), 4–5.

- This complexity. Bennett, 5.

- Cognitive capacity is limited. Bennett, 363.

- Average person can read. Suleyman, 87.

- Single AI program. Bennett, 345, 416.

- Eaten the world. Suleyman, 82.

- Vast, almost instantaneous. Suleyman, 80.

- Alien intelligence. Yuval Harari, “AI Has Hacked the Operating System of Human Civilization,” The Economist, April 28, 2023. (https://economist.com/byinvitation/2023/04/28/yuval-noah-harari-argues-that-ai-has-hacked-the-operating-system-of-human-civilization?). Harari is an Israeli historian, philosopher, a professor in the Department of History at the Hebrew University in Jerusalem and the author of Sapiens: A Brief History of Humankind (2011), Homo Deus: A Brief History of Tomorrow (2015), and Nexus (2024).

- Bioplasmic machine. Patterson, Images, 3.

- Three pairs. Suleyman, 71–72.

- DeepMind organized. Suleyman, 72.

- Go Strategy. Suleyman, 73–74.

- Emergent abilities. Yuval Harari, AI and the future of humanity, “Frontiers Forum Live,” 2023 Conference, (https://www.youtube.com/watch?v=azwt2pxn3UI). Internal research on GPT-4 concluded that it was “probably” not capable of acting autonomously or self-replicating, but within days of launch users had found ways of getting the system to ask for its own documentation and to write scripts for copying itself and taking over other machines. Early research even claimed to find “sparks of AGI” (artificial general intelligence) in the model, adding that it was “strikingly close to human-level performance.”

- Every previous technology. Harari and Suleyman, “What Does the AI Revolution Mean for Our Future?” The Economist, September 17, 2023, (https://www.youtube.com/watch?v=7JkPWHr7sTY).

- All of these abilities. Harari, “Frontiers Forum Live” 2023 conference, AI and the Futures of Humanity, (https://singjupost.com/transcript-ai-and-the-future-of-humanity-yuval-noah-harari/?singlepag=1).

- We designed the learning. “Godfather of AI” Geoffrey Hinton: The 60 Minutes Interview, October 9, 2023 (https://www.youtube.com/watch?v=qrvK_KuIeJk).

- They function differently. Suleyman, 149.

- You can’t walk. Suleyman, 222.

- Quite simply. Suleyman, 53.

- What mistakes get made. Suleyman, 222.

- The line of the development. Ouspensky, 129–130.

- The principle of the discontinuity. Ouspensky, 129.

- Think how many turns. Ouspensky, 129.

- About unintended consequences. Suleyman, 222.

- Examples of revenge effects. Suleyman, 52.

- Those with low p(doom). Kevin Roose, “Silicon Valley Confronts a Grim New AI Metric,” New York Times, Dec. 6, 2023. (https://www.nytimes.com/2023/12/06/business/dealbook/silicon-valley-artificial-intelligence.html).

- Those with high p(doom). Roose, “Grim New AI Metric.”

- Designers of the RBMK-1000. Eliezer Yudkowsky, December 3, 2023, (https://x.com/ESYudkowsky/status/1731391379652399151).

- Elon Musk has claimed. He declares that AI is an existential threat even as he is racing to develop his own generative AI while trying to control the very system that would regulate the development of AI more broadly.

- The apocalypse could come. Maureen Dowd, “Sam Altman: Sugarcoating the Apocalypse,” New York Times, Dec. 2, 2023. (https://www.nytimes.com/2023/12/02/opinion/ai-sam-altman-openai.html).

- He gives his p(doom). “List of p(doom) Values” Pause AI, (https://pauseai.info/pdoom).

- Challenge. Steve Rose, “Five ways AI might destroy the world: ‘Everyone on Earth could fall over dead in the same second.’” The Guardian, July 7, 2023, (https://www.theguardian.com/technology/2023/jul/07/five-ways-ai-might-destroy-the-world-everyone-on-earth-could-fall-over-dead-in-the-same-second).

- Ten million years ago. Russell, 132–33. Gurdjieff does not adhere to Darwin’s theory of evolution. Nevertheless, Russell’s analogy about the dangers posed by the invention of something smarter than we are is apt.

- It doesn’t require much imagination. Russell, 132.

- We have wiped out. Rose.

- In order to achieve. Rose.

- It’s feasible to build. Rose.

- Right now scientists. Time Magazine Summit, April 24, 2024, Eric Schmidt and Yoshua Bengio Debate How Much A.I. Should Scare Us, (https://www.youtube.com/watch?v=5LgDUqCbBwo).

- All the safety precautions. Schmidt & Bengio, Time Magazine Summit.

- Companies claim. Michael Isaac and Cade Metz, “Meta, in Its Biggest A.I. Push, Places Smart Assistants Across Its Apps,” New York Times, April 18, 2024, (https://www.nytimes.com/2024/04/18/technology/meta-ai-assistant-push.html).

- However clever the designers. Suleyman, 262.

- A strong case. Suleyman, 150.

- Allow for the possibility of accident. Suleyman, 265.

- A truism of modern science. Linguist Noam Chomsky posits that humans have an innate facility for language built into the structure of the human brain. (https://iep.utm.edu/Chomsky-philosophy/).

- In the beginning. Yuval Harari, Tristan Harris and Aza Raskin, “You Can Have the Blue Pill or the Red Pill, and We’re Out of Blue Pills,” New York Times, March 24, 2023. (https://www.nytimes.com/2023/03/24/opinion/yuval-harari-ai-chatgpt.html).

- Within a few years. Harari, “Blue Pill.”

- For thousands of years. Harari, “Blue Pill.”

- What would it mean. Harari, Frontiers Forum.

- The water is the world. Patterson, Spiritual Survival, 12.

- Thin film of false reality. Ouspensky, 4.

- Break the water line. Patterson, Spiritual Survival, 16.

- Relentlessly, [technology] is reordering. Patterson, Spiritual Survival, 5.

- Being disembodied. Patterson, “Human Beings—An Endangered Species,” The Gurdjieff Journal #66, 25.

- We allow our purpose. Patterson, Spiritual Survival, 6.

- At its core, the metaverse. Cristian Randieri, “The Metaverse: Shaping The Future Of The Internet And Business Through AI Integration,” Forbes Council Post, September 18, 2023, (https://www.forbes.com/sites/forbestechcouncil/2023/09/18/the-metaverse-shaping-the-future-of-the-internet-and-business-through-ai-integration/).

- “Metaverse.” Merriam-Webster.com Dictionary, (https://www.merriam-webster.com/dictionary/metaverse). “Virtual reality” is defined by Merriam-Webster as “an artificial environment which is experienced through sensory stimuli (such as sights and sounds) provided by a computer and in which one’s actions partially determine what happens in the environment.” The second definition is narrower, “Metaverses are immersive three-dimensional virtual worlds in which people interact as avatars with each other and with software agents, using the metaphor of the real world but without its physical limitations.”

- Exists as a digital ecosystem. Linda Tucci, “What is the metaverse? An explanation and in-depth guide,” TechTarget: What Is, March 22, 2024, (https://www.techtarget.com/whatis/feature/The-metaverse-explained-Everything-you-need-to-know).

- McKinsey & Company. “Value Creation in the Metaverse: The Real Business of the Virtual World,” June 2022, (https://www.mckinsey.com/capabilities/growth-marketing-and-sales/our-insights/value-creation-in-the-metaverse).

- In a world where people lose. Ezra Klein, “Yuval Harari on why humans won’t dominate Earth in 300 years,” Vox, March 27, 2017, (https://www.vox.com/2017/3/27/14780114/yuval-harari-ai-vr-consciousness-sapiens-homo-deus-podcast).

- Drugs and computer games. Klein, “Yuval Harari…on why.” Vox.

- The danger. Patterson, Spiritual Survival, 6.

- Technology and the technological. Patterson, Spiritual Survival, 7.

- On its home webpage. Carnegie Mellon University, (https://www.cmu.edu/real/).

- Foundation of spiritual self-transformation. Patterson, Spiritual Survival, 19.

- A conscious awareness. Patterson, Spiritual Survival, 13.

- Begin to separate the body from the mind. Patterson, Spiritual Survival, 20.

- Plato divided. Patterson, Spiritual Survival, 20, 7.

- The bioplasmic machine is composed of. Patterson, Spiritual Survival, 28.